Industrial benchmarking is misleading without scrap rate context

Industrial benchmarks often mislead when scrap rate data is missing. Comparisons of AI-driven manufacturing lines, digital supply chain performance, and industrial sustainability targets all become distorted. For researchers and operators working with manufacturing technology, smart materials, and automation technology, this hidden variable is essential to understand. This article explains why scrap rate context is critical to supply chain intelligence, industrial convergence, and more reliable industrial intelligence.

Why scrap rate context changes the meaning of industrial benchmarking

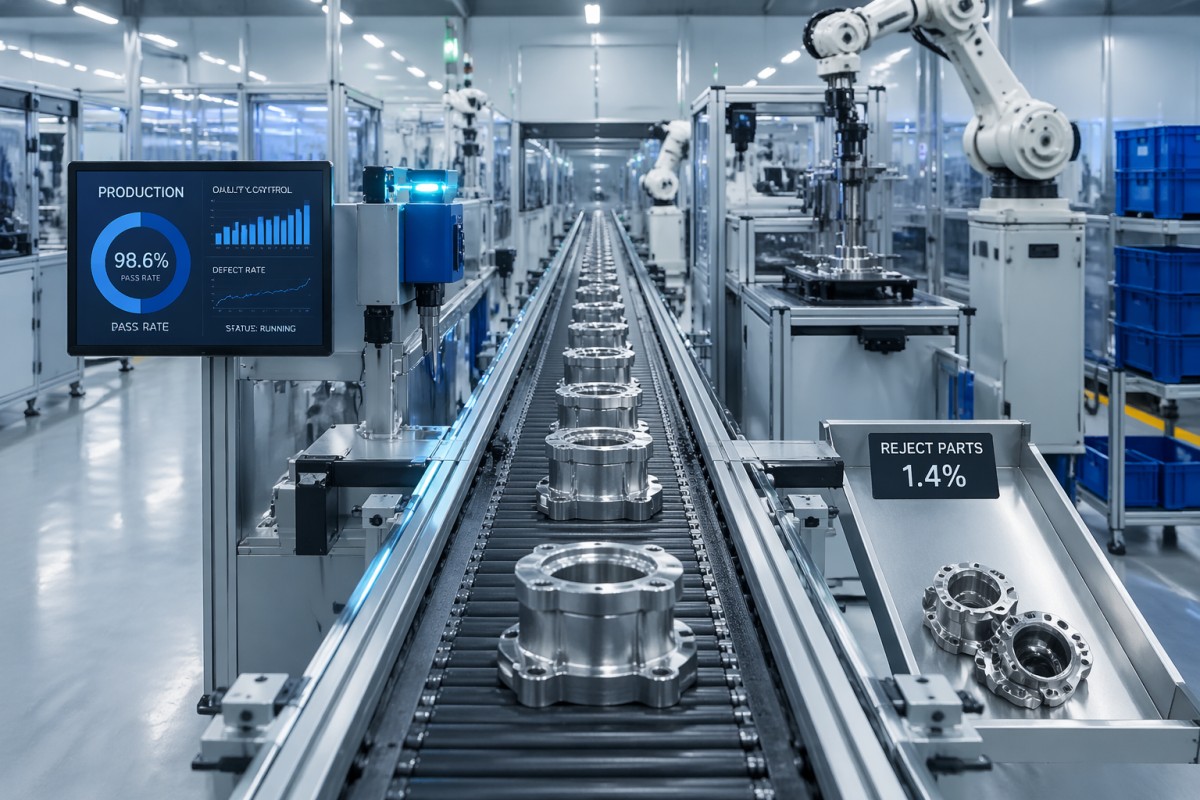

Industrial benchmarking is often presented as a clean comparison of cycle time, output per hour, energy intensity, labor utilization, or on-time delivery. In practice, those indicators can be misleading when scrap rate is missing. A line producing 1,000 units per shift and discarding 8% of output yields 920 good units; a line producing 940 units with a 1.5% scrap rate yields about 926. The nominally slower line delivers more sellable output from less material, so the two are not operationally equivalent. For procurement teams, technical evaluators, and plant operators, the difference affects cost, planning stability, and material efficiency.
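The comparison between the two lines can be checked with a short calculation. This is a minimal sketch, assuming scrap rate is expressed as a fraction of gross output; the function names `good_units` and `input_per_good_unit` are hypothetical and chosen only for illustration.

```python
def good_units(gross_units: float, scrap_rate: float) -> float:
    """Good (sellable) units left after scrap; scrap_rate is a fraction of gross."""
    if not 0.0 <= scrap_rate < 1.0:
        raise ValueError("scrap_rate must be in [0, 1)")
    return gross_units * (1.0 - scrap_rate)


def input_per_good_unit(scrap_rate: float) -> float:
    """Gross units that must be started, on average, to obtain one good unit."""
    return 1.0 / (1.0 - scrap_rate)


# The two lines from the example in the text.
line_a = good_units(1000, 0.08)    # 1,000 gross units, 8% scrap
line_b = good_units(940, 0.015)    # 940 gross units, 1.5% scrap

print(f"Line A: {line_a:.1f} good units, "
      f"{input_per_good_unit(0.08):.3f} starts per good unit")
print(f"Line B: {line_b:.1f} good units, "
      f"{input_per_good_unit(0.015):.3f} starts per good unit")
```

Beyond the unit counts, the starts-per-good-unit ratio shows how much more input the higher-scrap line consumes for every unit it can actually ship.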

This issue matters across the broader industrial ecosystem because the same benchmark can support very different decisions. A researcher may use it to map process capability. An operator may use it to judge line performance over 2–4 weeks. A supply chain planner may use it to estimate replenishment risk each month or each quarter. Without scrap rate context, all three users can reach the wrong conclusion from the same dashboard.

In AI-driven manufacturing, the risk becomes larger. Predictive systems can optimize throughput and scheduling, but if scrap is treated as a separate quality event rather than a benchmark variable, digital intelligence may overstate actual productive capacity. The result is a false view of asset utilization, material conversion, and production readiness. This is exactly where multidisciplinary benchmarking repositories such as G-AIE add value: they connect material science, automation signals, and supply chain intelligence into one usable reference frame.

A practical benchmark should therefore compare at least 4 layers together: gross output, good output, scrap rate, and scrap classification. In many sectors, the useful comparison window is not one day but 7–30 days, because scrap can spike during material changes, tool wear, maintenance resets, or new operator shifts. That wider context produces a more realistic industrial benchmark.
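One way to keep the four layers together is to aggregate them from the same records over the chosen window. The sketch below is illustrative only: the `DayRecord` structure, the category labels, and the three-day sample data are assumptions, not figures from any real plant.

```python
from dataclasses import dataclass, field


@dataclass
class DayRecord:
    gross_units: int
    # Scrap counts keyed by category label, e.g. "process_defect", "startup_loss".
    scrap_by_category: dict = field(default_factory=dict)


def summarize(records):
    """Aggregate gross output, good output, scrap rate, and scrap classes."""
    gross = sum(r.gross_units for r in records)
    scrap_totals = {}
    for r in records:
        for category, count in r.scrap_by_category.items():
            scrap_totals[category] = scrap_totals.get(category, 0) + count
    scrap = sum(scrap_totals.values())
    return {
        "gross_output": gross,
        "good_output": gross - scrap,
        "scrap_rate": scrap / gross if gross else 0.0,
        "scrap_by_category": scrap_totals,
    }


# Hypothetical three-day window; day 2 includes a material change.
window = [
    DayRecord(1000, {"process_defect": 15}),
    DayRecord(1000, {"process_defect": 12, "startup_loss": 60}),
    DayRecord(1000, {"process_defect": 18}),
]
summary = summarize(window)
print(summary)
```

In this sample, day 1 alone would report 1.5% scrap, while the full window reports 3.5% because the material change is included. That gap is the reason a multi-day window is usually the more honest basis.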

What scrap rate actually changes in benchmark interpretation

Scrap rate influences more than quality. It changes the economics of material use, the reliability of promised lead times, and the credibility of automation performance claims. A process with high first-pass yield usually needs less rework, fewer manual interventions, and fewer urgent material calls. That affects labor planning, warehouse buffers, and supplier coordination across the full manufacturing network.

- Capacity interpretation: a machine running at 90% utilization may deliver much less sellable output if scrap remains above the acceptable threshold.

- Material cost interpretation: high-value alloys, engineered polymers, coatings, or composite inputs can turn a small scrap increase into a major cost event.

- Sustainability interpretation: reported energy per finished unit changes when rejected units are included correctly.

- Supply chain interpretation: replenishment models and safety stock calculations become distorted when scrap is underreported or excluded.
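The capacity, sustainability, and supply chain points above each reduce to a simple adjustment by the good-output fraction. The following is a rough sketch under simplifying assumptions: utilization is measured on gross output, scrap rate is a constant fraction, and all input figures (500 kWh, 5,000 units) are hypothetical.

```python
def sellable_capacity_share(utilization: float, scrap_rate: float) -> float:
    """Fraction of nominal capacity that ends up as sellable output."""
    return utilization * (1.0 - scrap_rate)


def energy_per_good_unit(total_energy_kwh: float, gross_units: float,
                         scrap_rate: float) -> float:
    """Energy per accepted unit; energy spent on rejected units still counts."""
    return total_energy_kwh / (gross_units * (1.0 - scrap_rate))


def starts_needed(good_units_required: float, scrap_rate: float) -> float:
    """Gross units to start so that, on average, the required good units survive."""
    return good_units_required / (1.0 - scrap_rate)


share = sellable_capacity_share(0.90, 0.08)        # 90% utilization, 8% scrap
energy = energy_per_good_unit(500.0, 1000, 0.08)   # 500 kWh over 1,000 gross units
starts = starts_needed(5000, 0.08)                 # order for 5,000 good units

print(f"Sellable share of capacity: {share:.3f}")
print(f"Energy per good unit: {energy:.3f} kWh (vs {500.0 / 1000:.3f} per gross unit)")
print(f"Starts needed for 5,000 good units: {starts:.0f}")
```

A replenishment model that plans material for 5,000 starts instead of the scrap-adjusted figure would run short in exactly the way the last bullet describes.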

For information researchers, this means benchmark data should never be read in isolation. For operators, it means performance reviews need a shared definition of scrap boundaries: startup loss, setup waste, trim loss, process defects, and material contamination should not be mixed into one undefined number. A benchmark without those distinctions is too weak for serious decision-making.

Where missing scrap rate creates the biggest decision errors

The most common mistake is comparing suppliers, plants, or automation cells by nominal output alone. This can happen in electronics assembly, precision machining, advanced materials processing, packaging, or multi-step component conversion. A site that appears faster may actually consume more raw material per accepted unit, create more downstream inspection load, and introduce more schedule volatility over a 3-stage production chain.

This problem is especially visible in cross-regional sourcing. Benchmark data from one facility may include startup waste and quality holds, while another facility reports only steady-state production. The comparison looks objective, yet the reporting logic is inconsistent. For procurement managers and operating teams working under tight delivery windows of 2–6 weeks, that mismatch can lead to unrealistic supplier selection or incorrect internal capacity assumptions.

G-AIE’s industrial intelligence perspective is useful here because convergence matters. Material behavior, machine settings, AI control loops, inspection sensitivity, and logistics timing all interact. Scrap rate is not simply a quality metric; it is a convergence metric. It shows whether physical assets and digital intelligence are aligned well enough to generate repeatable, sellable output.

For operators, the hidden damage often appears later. The line seems efficient on paper, but changeovers take longer, bins fill with rejected parts, inspectors spend extra time on borderline units, and replenishment signals become noisy. A benchmark that excludes these realities may support a good presentation, but not a stable production system.

Typical scenarios where benchmark distortion becomes costly

The table below summarizes common industrial scenarios where missing scrap rate context leads to flawed benchmarking and poor operational judgment. These cases are relevant to mixed-industry environments where automation, materials, and supply chain timing must be assessed together.

The pattern is consistent: when scrap rate is excluded, operational comparisons drift away from commercial reality. That is why benchmark reviews should include usable output definitions, scrap categories, and the time window used for measurement before any sourcing, investment, or process decision is finalized.

A simple operator check before trusting a benchmark

Before accepting benchmark claims, many operators use a 5-point check: measurement period, defect classification, startup inclusion, rework handling, and good-output basis. This takes little time, yet it can reveal whether a benchmark reflects actual plant performance or only a selective reporting slice. In most cases, the check can be completed within a single shift review or one weekly performance meeting.
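The 5-point check can be captured as a small record that flags whatever a benchmark claim leaves unstated. This is only a sketch: the `BenchmarkClaim` class and its field names are hypothetical, and a real review would attach evidence to each point rather than a single value.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class BenchmarkClaim:
    # Each field mirrors one of the five checks; None means "not stated".
    measurement_period_days: Optional[int] = None
    defect_classification: Optional[str] = None
    startup_included: Optional[bool] = None
    rework_handling: Optional[str] = None
    good_output_basis: Optional[bool] = None


def missing_context(claim: BenchmarkClaim) -> list:
    """Return the names of checks the claim does not answer."""
    return [f.name for f in fields(claim) if getattr(claim, f.name) is None]


# Hypothetical supplier claim that states only the window and startup handling.
claim = BenchmarkClaim(measurement_period_days=14, startup_included=False)
gaps = missing_context(claim)
print(gaps)
```

An empty list does not prove the benchmark is sound, but a non-empty one is a clear prompt for follow-up questions before the number is used.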

How to benchmark fairly across plants, suppliers, and automation systems

Fair industrial benchmarking does not require perfect data, but it does require consistent definitions. A useful approach is to normalize comparisons around sellable output and process conditions. Instead of asking which line runs faster, ask which line delivers the lowest cost of accepted units within the required tolerance band, over a comparable operating window such as 2 shifts, 7 days, or 30 days depending on process variability.
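Normalizing around sellable output can be illustrated with a cost-per-accepted-unit calculation. The sketch below makes strong simplifying assumptions: scrapped units have no salvage value, rework is ignored, and a single hypothetical `cost_per_gross_unit` covers material and conversion cost. The line names and figures are invented for illustration.

```python
def cost_per_accepted_unit(gross_units: float, scrap_rate: float,
                           cost_per_gross_unit: float) -> float:
    """Total cost of everything started, divided by units actually accepted."""
    accepted = gross_units * (1.0 - scrap_rate)
    if accepted <= 0:
        raise ValueError("no accepted output")
    return gross_units * cost_per_gross_unit / accepted


# Same cost per started unit on both lines; only speed and scrap differ.
lines = {
    "fast_line": cost_per_accepted_unit(1000, 0.08, 10.0),
    "slow_line": cost_per_accepted_unit(940, 0.015, 10.0),
}
best = min(lines, key=lines.get)

for name, cost in lines.items():
    print(f"{name}: {cost:.2f} per accepted unit")
print(f"Lowest cost of accepted units: {best}")
```

Under these assumptions the slower line wins on cost per accepted unit, which is the kind of result a throughput-only comparison would hide.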

For mixed industrial environments, at least 3 metric families should be aligned: throughput metrics, quality metrics, and material-efficiency metrics. If digital supply chain systems track only the first family, decision-makers can overestimate available capacity. If they track quality but not material loss, sustainability and cost signals remain incomplete. G-AIE supports a stronger approach by integrating asset performance data with material context and technical benchmarking logic.

This matters during procurement and internal capacity planning. A benchmark should not only answer whether a process can run. It should answer whether it can run reliably under the intended product mix, batch size, operator profile, and material conditions. Small-batch, medium-batch, and large-batch production often show different scrap behavior, especially during setup, tool changes, and recipe transitions.

The goal is comparability, not complexity. A benchmark that includes a few core variables consistently is more valuable than a large dashboard with undefined data. In many industrial reviews, 6 acceptance variables are enough to prevent major interpretation errors.

Recommended benchmark framework for practical decision-making

The following framework helps researchers, sourcing teams, and operators compare lines or suppliers without losing scrap rate context. It is designed for broad industrial use where material science and automation performance intersect.

When these dimensions are aligned, benchmark results become far more actionable. Teams can identify whether underperformance is driven by machine instability, material inconsistency, operator transition, or inspection threshold changes. That distinction is critical for both process improvement and supplier evaluation.

A 4-step implementation path

- Define benchmark scope: asset, product family, batch type, and time window.

- Separate gross output, accepted output, rework, and true scrap categories.

- Normalize material and automation conditions before cross-site comparison.

- Review decisions against cost, delivery risk, and sustainability impact together.

This 4-step model is often sufficient for early-stage intelligence work and can be expanded later for more detailed digital supply chain analytics.
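The first step, and the comparability gate that should precede any cross-site comparison, can be sketched as a scope record with a mismatch check. The `BenchmarkScope` fields follow the scope items listed in step 1; the class itself, the function name, and the sample values are hypothetical.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class BenchmarkScope:
    asset: str
    product_family: str
    batch_type: str      # e.g. "small", "medium", "large"
    window_days: int


def scope_mismatches(a: BenchmarkScope, b: BenchmarkScope) -> list:
    """Names of scope fields that differ between two benchmarks."""
    return [f.name for f in fields(a) if getattr(a, f.name) != getattr(b, f.name)]


# Two hypothetical sites reporting on the same asset type and product family.
site_1 = BenchmarkScope("press_cell", "brackets", "small", 30)
site_2 = BenchmarkScope("press_cell", "brackets", "large", 7)

mismatches = scope_mismatches(site_1, site_2)
print(mismatches)
```

Here the two benchmarks differ in batch type and time window, so their scrap rates should not be placed side by side until those conditions are aligned or the difference is stated explicitly.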

Procurement and operator guide: what to ask before accepting benchmark claims

For buyers and technical users, the strongest benchmark question is not “What is your output?” but “What is your accepted output under stable and unstable conditions?” That single shift in wording changes the quality of supplier conversations. It also reduces the risk of overcommitting to a source that looks efficient in presentations but performs inconsistently during ramp-up, changeover, or material variation.

Procurement teams should request benchmark evidence over more than one operating state. In many industrial settings, useful evidence includes normal production, post-changeover production, and early ramp production. If a supplier or internal plant can only provide best-case data from one narrow window, the benchmark is incomplete. A realistic review typically covers at least 3 production conditions and 5 key checks.

Operators should also verify how scrap is captured in control systems. If inspection systems classify borderline parts differently across shifts, reported scrap rate may reflect rule settings rather than process quality. In AI-supported environments, this is especially important because model tuning, anomaly thresholds, and inspection retraining can change apparent benchmark results without any physical equipment improvement.
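The effect of rule settings on reported scrap is easy to demonstrate. In the sketch below, the same set of parts is classified under two tolerance thresholds; the deviation values and thresholds are invented, and a simple absolute-deviation rule stands in for whatever logic a real inspection system applies.

```python
def reported_scrap_rate(deviations_mm, tolerance_mm: float) -> float:
    """Share of parts rejected when |deviation| exceeds the tolerance."""
    rejected = sum(1 for d in deviations_mm if abs(d) > tolerance_mm)
    return rejected / len(deviations_mm)


# Hypothetical measured deviations from nominal for ten parts.
deviations_mm = [0.01, 0.04, -0.02, 0.06, 0.03, -0.05, 0.00, 0.02, 0.07, -0.01]

strict = reported_scrap_rate(deviations_mm, tolerance_mm=0.04)
lenient = reported_scrap_rate(deviations_mm, tolerance_mm=0.06)

print(f"Strict rule:  {strict:.0%} scrap")
print(f"Lenient rule: {lenient:.0%} scrap")
```

The physical parts are identical in both cases. Only the classification rule changed, yet the reported scrap rate moves from 30% to 10%, which is why threshold settings belong in the benchmark definition.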

G-AIE is positioned to help in this evaluation because the platform's focus is not limited to isolated machine metrics. It supports institutional users who need benchmarking tied to materials, automation maturity, and supply chain resilience. That is highly relevant when benchmark data must support sourcing decisions, technical due diligence, or cross-functional plant reviews.

5 key checks for benchmark-based sourcing or plant comparison

- Confirm whether scrap includes startup, shutdown, and changeover losses. These can materially alter benchmark interpretation in short runs.

- Ask for good-output yield by batch type. Small-batch and large-batch performance should not be merged into one number.

- Check whether reworked units are reported separately from first-pass accepted units. They represent different labor and risk profiles.

- Review material assumptions, including grade consistency and handling conditions. Variable inputs can inflate scrap independently of equipment capability.

- Link benchmark data to delivery promises. A strong benchmark should survive real scheduling pressure, not only controlled test runs.

These checks are practical, not theoretical. They help teams avoid false comparisons and support better product selection, line assessment, and quote evaluation. For many B2B industrial buyers, they also improve negotiations by shifting the discussion from headline speed to usable, dependable output.
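The third check, separating reworked units from first-pass accepted units, is worth a brief worked example. This is a minimal sketch with invented figures; `yield_breakdown` is a hypothetical helper, and it assumes every gross unit ends up as first-pass accepted, accepted after rework, or scrap.

```python
def yield_breakdown(gross_units: int, first_pass_accepted: int,
                    reworked_accepted: int) -> dict:
    """Split accepted output into first-pass yield and yield after rework."""
    if first_pass_accepted + reworked_accepted > gross_units:
        raise ValueError("accepted units exceed gross output")
    final_yield = (first_pass_accepted + reworked_accepted) / gross_units
    return {
        "first_pass_yield": first_pass_accepted / gross_units,
        "final_yield": final_yield,
        "scrap_rate": 1.0 - final_yield,
    }


# Two hypothetical sites that report the same headline scrap rate.
site_a = yield_breakdown(1000, first_pass_accepted=960, reworked_accepted=10)
site_b = yield_breakdown(1000, first_pass_accepted=850, reworked_accepted=120)

for name, result in (("Site A", site_a), ("Site B", site_b)):
    print(f"{name}: FPY {result['first_pass_yield']:.1%}, "
          f"final yield {result['final_yield']:.1%}, "
          f"scrap {result['scrap_rate']:.1%}")
```

Both sites report 3% scrap, but Site B reaches that figure only by reworking 12% of its output, which implies a very different labor load and delivery risk.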

Common misconceptions, future benchmarking trends, and why to work with G-AIE

One common misconception is that scrap rate only matters to quality teams. In reality, it affects finance, procurement, planning, sustainability, and automation engineering at the same time. Another misconception is that low reported scrap always signals strong process control. Sometimes it only signals narrow reporting rules, aggressive rework classification, or selective measurement windows. That is why context is essential.

A third misconception is that digital manufacturing systems automatically solve benchmark quality. They do not. Better sensors and AI models can improve visibility, but they can also create a false sense of precision if the benchmark framework itself is weak. Over the next 12–36 months, the most useful industrial intelligence will likely combine machine data, material behavior, and accepted-output economics rather than relying on isolated throughput metrics.

This trend aligns with the broader shift toward resilient industrial ecosystems. As companies balance Vertical AI, advanced materials, and supply chain volatility, benchmark quality becomes a strategic capability. Reliable industrial benchmarking will increasingly require shared data definitions, cross-functional review, and better linkage between process physics and digital decision models.

For organizations that need a more credible basis for comparison, G-AIE offers a strong institutional advantage. Its focus on the convergence of material science and intelligent automation helps users interpret industrial benchmarks in a way that is useful for sourcing, technical assessment, and operational improvement. This is particularly valuable when decisions must account for scrap behavior, automation logic, sustainability goals, and global supply chain resilience together.

FAQ: practical questions from researchers and operators

How much benchmark history is usually enough?

For stable, mature lines, 7–30 days is often more useful than a single day because it captures routine variation. For new launches or variable materials, a longer window may be necessary. The goal is to include normal production, at least one transition condition, and any recurring scrap pattern.

Is scrap rate still important when AI inspection is highly advanced?

Yes. Advanced inspection improves visibility but does not remove material loss or process instability. In some cases, tighter inspection can initially raise reported scrap because defect detection becomes more sensitive. That does not make benchmarking worse, but it does make clear definitions more important.

What should operators watch first when benchmark numbers look unusually strong?

Start with three checks: whether the numbers are gross or accepted output, whether startup losses are excluded, and whether rework is combined with first-pass yield. Those three points often explain why one benchmark appears better than another.

Can scrap rate context help with sustainability reporting?

Absolutely. Energy, water, and material-use indicators become more meaningful when calculated per accepted unit rather than per gross unit. This gives a more realistic view of resource efficiency and supports stronger internal decision-making around process improvement and sourcing.

Why choose us for industrial benchmarking support

If your team is evaluating suppliers, reviewing plant performance, or building a more reliable digital supply chain benchmark, G-AIE can help structure the right comparison logic before decisions are locked in. You can consult us on benchmark parameters, scrap classification, accepted-output definitions, product selection criteria, material-context interpretation, and cross-site comparison methods.

We also support practical decision points that matter in B2B operations: expected delivery windows, technical due diligence scope, customized benchmarking frameworks, sample or pilot evaluation logic, and quotation discussions linked to true production efficiency rather than headline output. This makes the conversation useful for both information researchers and plant-level users.

When benchmark data needs to support sourcing, operations, and long-term industrial resilience at the same time, context is the difference between a presentation metric and a decision-grade metric. Contact G-AIE to discuss your benchmark framework, validation checklist, comparison scope, or material-and-automation intelligence needs.
